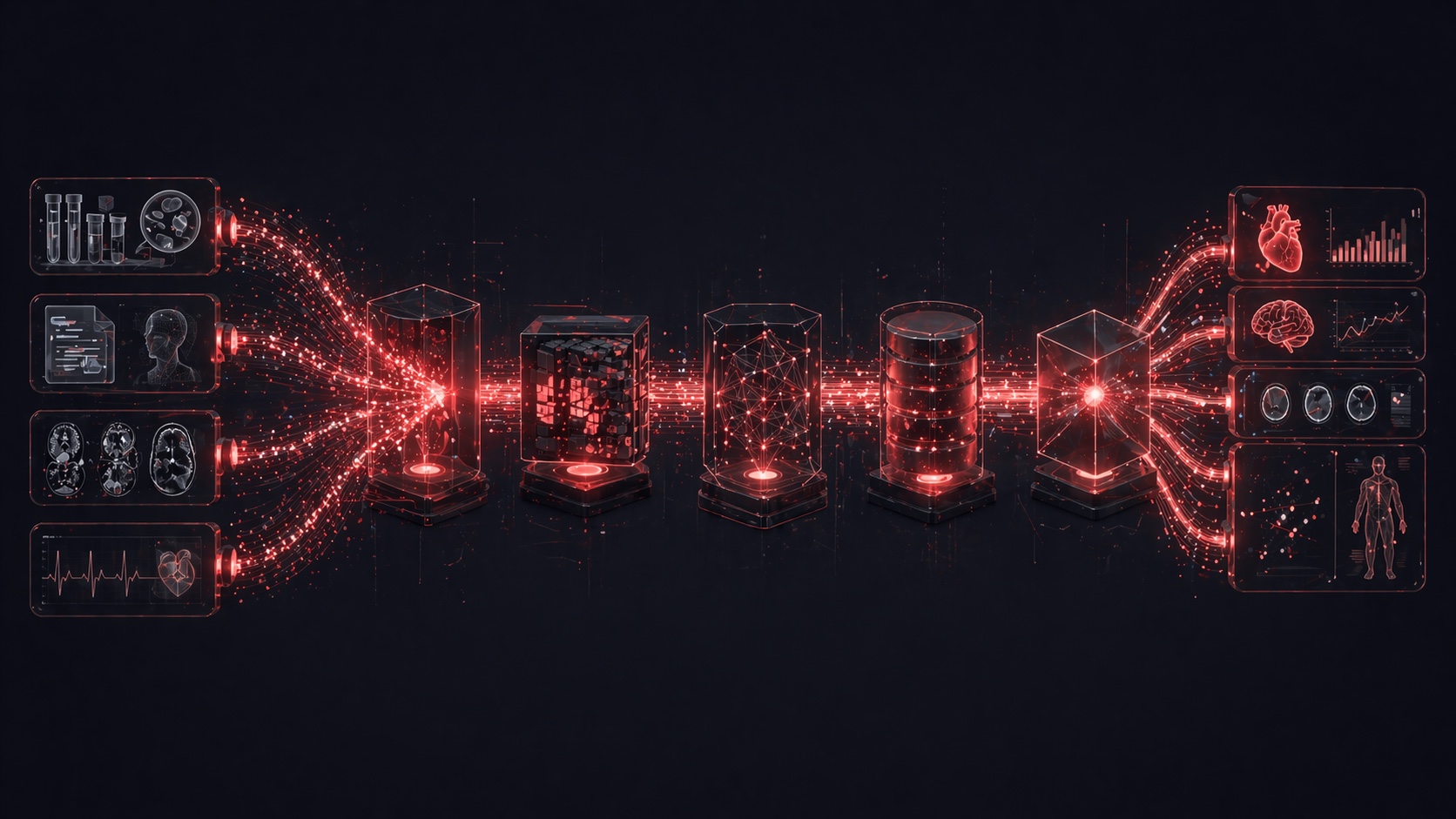

What is a healthcare data pipeline?

A healthcare data pipeline is the architecture that ingests, processes, validates, and delivers clinical data in real time, so that care teams can act on insights immediately instead of waiting on delayed batch systems.

Why most clinical data arrives too late

Your clinical data is often hours old by the time anyone sees it.

A critical lab result may be processed in minutes but still take hours to reach dashboards.

In an ICU, that delay is not just an operational problem; it is a clinical risk.

This problem is rooted in outdated integration patterns, as explained in EHR integration architecture.

The healthcare data pipeline architecture (5 layers)

Layer 1: Ingestion

Healthcare data comes from multiple sources:

- HL7 v2 messages

- FHIR APIs

- Custom APIs

- File drops (CSV, XML)

- Device streams

Ingestion pattern

All sources should be transformed into a canonical format:

Source → Parser → Transform → Event Bus

Key principle:

Normalize to FHIR at ingestion

Every downstream layer then works against one data model instead of one per source format, which aligns with modern healthcare data architecture standards.
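As a rough illustration of normalizing at ingestion, the sketch below maps a single HL7 v2 OBX segment to a minimal FHIR Observation. The function name `obx_to_fhir_observation`, the sample segment, and the patient ID are hypothetical, and the parser assumes a numeric (NM) result with no escaped delimiters. A production pipeline would use a full HL7 parser and handle every segment in the message.

```python
from typing import Any


def obx_to_fhir_observation(obx_segment: str, patient_id: str) -> dict[str, Any]:
    """Map one HL7 v2 OBX segment to a minimal FHIR Observation (illustrative only)."""
    fields = obx_segment.split("|")
    if fields[0] != "OBX":
        raise ValueError("expected an OBX segment")

    # OBX-3 is the observation identifier: code^display^coding system.
    code, display, system = (fields[3].split("^") + ["", "", ""])[:3]
    # OBX-5 is the value, OBX-6 the units, OBX-11 the result status.
    value = float(fields[5])  # assumes a numeric (NM) result
    unit = fields[6].split("^")[0]
    status = {"F": "final", "P": "preliminary", "C": "corrected"}.get(fields[11], "unknown")

    return {
        "resourceType": "Observation",
        "status": status,
        "code": {
            "coding": [{
                "system": "http://loinc.org" if system == "LN" else system,
                "code": code,
                "display": display,
            }]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "valueQuantity": {"value": value, "unit": unit},
    }


# Hypothetical sample segment: a potassium result coded in LOINC.
segment = "OBX|1|NM|2823-3^Potassium^LN||6.8|mmol/L|3.5-5.1|HH|||F"
observation = obx_to_fhir_observation(segment, patient_id="12345")
print(observation["code"]["coding"][0]["code"], observation["valueQuantity"])
```

Once every source is mapped to the same resource shape, the event bus only ever carries one kind of payload.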

Layer 2: Processing — streaming vs batch

Streaming (real-time)

Used for:

- Critical alerts

- Live dashboards

- Clinical decision triggers

Tech:

- Kafka

- Flink

- Kinesis

Batch (scheduled)

Used for:

- Reporting

- Population health

- Financial analytics

Tech:

- Spark

- dbt

- Azure Data Factory

Recommended: hybrid architecture

Event Bus → Streaming → Real-time store → Alerts & dashboards

Event Bus → Batch → Data lake → Analytics

This hybrid model is foundational in AI-powered clinical decision systems.
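The two paths above can be sketched with an in-memory stand-in for the event bus. Everything here is hypothetical (`InMemoryEventBus`, the topic name, the event fields). A real deployment would use Kafka or a similar broker, with separate consumer groups for the streaming and batch paths.

```python
from collections import defaultdict
from typing import Callable

Event = dict
Handler = Callable[[Event], None]


class InMemoryEventBus:
    """Stand-in for a real event bus; fans each event out to every subscriber."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: Event) -> None:
        for handler in self._subscribers[topic]:
            handler(event)


realtime_store: dict[str, Event] = {}  # latest event per patient, for dashboards
alerts: list[str] = []                 # alerts raised by the streaming path
batch_buffer: list[Event] = []         # events awaiting the next scheduled load


def streaming_consumer(event: Event) -> None:
    """Streaming path: update the real-time store and alert immediately."""
    realtime_store[event["patient_id"]] = event
    if event.get("critical"):
        alerts.append(f"Critical {event['test']} for patient {event['patient_id']}")


def batch_consumer(event: Event) -> None:
    """Batch path: only buffer the event; processing happens on a schedule."""
    batch_buffer.append(event)


def run_batch_load(data_lake: list[Event]) -> int:
    """Flush buffered events to the data lake; a scheduler would call this periodically."""
    loaded = len(batch_buffer)
    data_lake.extend(batch_buffer)
    batch_buffer.clear()
    return loaded


bus = InMemoryEventBus()
bus.subscribe("observations", streaming_consumer)
bus.subscribe("observations", batch_consumer)

bus.publish("observations", {"patient_id": "12345", "test": "potassium", "value": 6.8, "critical": True})
bus.publish("observations", {"patient_id": "67890", "test": "sodium", "value": 140, "critical": False})

data_lake: list[Event] = []
loaded = run_batch_load(data_lake)
print(alerts, loaded)
```

Both consumers see every event, so the alerting path never waits on the batch schedule and the analytics path never misses data that triggered an alert.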

Layer 3: Data quality and validation

Healthcare data must be validated across four dimensions:

Structural validation

Schema, required fields, data types

Referential validation

Patient IDs, provider matching

Clinical validation

Biological plausibility (e.g., lab ranges)

Deduplication

Handling retransmissions and retries

Validation pipeline

Data → Validators → Clean Store

Failures → Queues (error, review, duplicates)

This prevents corrupted data from reaching clinical systems.
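A minimal sketch of this routing follows, assuming flat dictionary records. The field names, the `KNOWN_PATIENTS` set, and the plausibility limits are hypothetical placeholders and not clinical guidance. Real validators would check against the FHIR schema and a master patient index.

```python
from typing import Optional

KNOWN_PATIENTS = {"12345", "67890"}            # hypothetical master patient index
PLAUSIBLE_RANGES = {"potassium": (1.0, 10.0)}  # illustrative limits, not clinical guidance


def validate(record: dict, seen_ids: set[str]) -> Optional[str]:
    """Return the name of the failure queue, or None if the record is clean."""
    # Structural: required fields and data types.
    required = ("message_id", "patient_id", "test", "value")
    if any(field not in record for field in required):
        return "error"
    if not isinstance(record["value"], (int, float)):
        return "error"
    # Deduplication: a retransmission reuses an already-seen message ID.
    if record["message_id"] in seen_ids:
        return "duplicates"
    # Referential: the patient must exist.
    if record["patient_id"] not in KNOWN_PATIENTS:
        return "error"
    # Clinical: biologically implausible values go to human review.
    low, high = PLAUSIBLE_RANGES.get(record["test"], (float("-inf"), float("inf")))
    if not low <= record["value"] <= high:
        return "review"
    return None


def run_pipeline(records: list[dict]) -> tuple[list[dict], dict[str, list[dict]]]:
    """Send clean records to the clean store and failures to their queues."""
    clean_store: list[dict] = []
    queues: dict[str, list[dict]] = {"error": [], "review": [], "duplicates": []}
    seen_ids: set[str] = set()
    for record in records:
        queue = validate(record, seen_ids)
        if queue is None:
            clean_store.append(record)
        else:
            queues[queue].append(record)
        # Remember the ID whatever the outcome, so retries are caught as duplicates.
        message_id = record.get("message_id")
        if isinstance(message_id, str):
            seen_ids.add(message_id)
    return clean_store, queues


records = [
    {"message_id": "m1", "patient_id": "12345", "test": "potassium", "value": 4.2},
    {"message_id": "m1", "patient_id": "12345", "test": "potassium", "value": 4.2},   # retransmission
    {"message_id": "m2", "patient_id": "12345", "test": "potassium", "value": 42.0},  # implausible
    {"message_id": "m3", "patient_id": "99999", "test": "potassium", "value": 4.0},   # unknown patient
    {"message_id": "m4", "patient_id": "67890", "test": "potassium"},                 # missing value
]
clean_store, queues = run_pipeline(records)
print(len(clean_store), {name: len(items) for name, items in queues.items()})
```

Implausible values go to a review queue and are not discarded, because an extreme reading may be genuine and a clinician should decide.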

Layer 4: Storage architecture

Hot store (real-time)

- FHIR databases

- Low latency

- Last 30–90 days

Used for:

- Clinical apps

- APIs

- Dashboards

Warm store (analytics)

- Columnar storage (Parquet)

- 1–5 years of data

Used for:

- Reporting

- Operational analytics

Cold store (archive)

- Long-term retention

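- Compliance retention (HIPAA requires keeping compliance documentation for six years; medical record retention periods are set by state law and are often longer)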
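One way to picture the tiers is as a lookup on record age. The sketch below uses the upper ends of the windows above as cutoffs. The constant names and the `storage_tier` function are hypothetical, and in practice recent data is usually written to the hot and warm stores at the same time, not moved between them.

```python
from datetime import date, timedelta

HOT_DAYS = 90         # upper end of the 30-90 day hot window
WARM_DAYS = 5 * 365   # upper end of the 1-5 year warm window


def storage_tier(recorded_on: date, today: date) -> str:
    """Return the lowest-latency tier expected to hold a record of this age."""
    age_days = (today - recorded_on).days
    if age_days <= HOT_DAYS:
        return "hot"
    if age_days <= WARM_DAYS:
        return "warm"
    return "cold"


today = date(2025, 1, 1)
print(storage_tier(today - timedelta(days=10), today))    # hot
print(storage_tier(today - timedelta(days=400), today))   # warm
print(storage_tier(today - timedelta(days=3000), today))  # cold
```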

Layer 5: Insight delivery

Data only matters if it reaches the right person at the right time.

Real-time alerts

Critical values delivered within seconds

Operational dashboards

Refreshed every 30–60 seconds

Clinical decision support

Integrated via FHIR workflows

Population health

Updated daily or weekly

Executive reporting

Daily and monthly summaries

These capabilities depend on the same foundation required for HIPAA-compliant AI systems.
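The delivery cadences above can be treated as freshness budgets and monitored. The sketch below is one hypothetical way to do that. The channel names and the 10-second alert budget are assumptions (the text only says "within seconds"), and clinical decision support is left out because no cadence is stated for it.

```python
from datetime import datetime, timedelta

# Maximum acceptable age of the data behind each delivery channel.
MAX_STALENESS = {
    "real_time_alerts": timedelta(seconds=10),        # assumed value for "within seconds"
    "operational_dashboards": timedelta(seconds=60),  # refreshed every 30-60 seconds
    "population_health": timedelta(days=7),           # updated daily or weekly
    "executive_reporting": timedelta(days=1),         # daily summaries
}


def is_stale(channel: str, last_updated: datetime, now: datetime) -> bool:
    """True when a channel's data is older than its freshness budget."""
    return now - last_updated > MAX_STALENESS[channel]


now = datetime(2025, 1, 1, 12, 0, 0)
print(is_stale("operational_dashboards", now - timedelta(seconds=90), now))  # True
print(is_stale("population_health", now - timedelta(days=2), now))           # False
```

A check like this turns "the right time" into something that can be tested for each audience.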

Implementation roadmap

Weeks 1–4

- Deploy Kafka

- Connect EHR, lab, ADT

- Define FHIR model

Months 2–3

- Real-time alerts

- Hot store deployment

- First dashboards

Months 3–6

- Add sources

- Build batch pipeline

- Deploy analytics

Month 6+

- Clinical decision support

- AI/ML models

- Population health

The bottom line

Healthcare does not have a data problem.

It has a latency problem.

The difference between a batch pipeline and a real-time pipeline is the difference between:

- Knowing what happened

- Acting when it matters

What HyperTrends builds

HyperTrends designs healthcare data pipelines that cover:

- Multi-source ingestion

- Real-time streaming

- FHIR transformation

- Data validation

- Insight delivery

Ready to move from delayed data to real-time clinical insight?

Schedule a consultation and design your healthcare data pipeline.